Hello, WebGPU!

The Web offers several tools for creating visual elements, such as bitmap images, stylesheets, vector graphics, and the <canvas> element.

These solutions, however, only partially leverage the capabilities of graphics cards (or GPUs).

Among the modern APIs for direct GPU access are Direct3D 12 for Windows, Metal for Apple platforms, and Vulkan for Linux and other platforms. None of these APIs, however, is available everywhere: Direct3D 12 and Metal are tied to their vendors' systems, and Vulkan has no native support on Apple platforms.

WebGPU architecture

WebGPU is a new specification designed to be cross-platform, supported by implementations such as Dawn (Google), wgpu (Mozilla) and WebKit (Apple). These implementations rely on native backends, namely Direct3D 12, Metal, and Vulkan, to provide uniform and performant GPU access, regardless of the operating system.

In the past, WebGL, in versions 1 and 2, marked a first step toward more direct GPU access, following an approach similar to desktop OpenGL. WebGL 2, in particular, maintained portability through the use of OpenGL ES, a widely supported and cross-platform API. However, the OpenGL ES standard that WebGL is based on is no longer evolving, making it less suitable for leveraging the capabilities of modern GPUs.

In the meantime, GPUs have evolved into General Purpose GPUs (GPGPUs), expanding their use beyond graphics, for example in training machine learning models. In this context, WebGL 2 does not expose compute shaders and needs a canvas to create its context, while WebGPU provides compute pipelines and can work without a canvas, rendering to off-screen textures when needed.

#GPU access

In JavaScript, the navigator.gpu object is the main entry point for WebGPU. If this object is not defined, it means WebGPU is not available:

    if (!navigator.gpu) {
      throw new Error('WebGPU not supported on this browser.');
    }

Firefox currently supports WebGPU only in Nightly builds, while the Release and Beta versions do not yet include this feature.
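A missing navigator.gpu does not always mean the browser lacks WebGPU: the API is only exposed in secure contexts (HTTPS or localhost). The following is a minimal sketch of a more descriptive check. The helper name webgpuStatus, the GlobalLike interface, and the status strings are hypothetical and exist only for illustration.

```typescript
// Possible outcomes of the availability check (illustrative names).
type WebGPUStatus = "available" | "insecure-context" | "unsupported";

// Minimal shape of the global scope needed by the check.
// Declared locally so the sketch does not depend on DOM typings.
interface GlobalLike {
  isSecureContext?: boolean;
  navigator?: { gpu?: unknown };
}

// Hypothetical helper: classifies why navigator.gpu is or is not usable.
function webgpuStatus(scope: GlobalLike): WebGPUStatus {
  if (scope.navigator?.gpu) {
    return "available";
  }
  // WebGPU is hidden on insecure pages, so its absence there
  // says nothing about actual browser support.
  if (scope.isSecureContext === false) {
    return "insecure-context";
  }
  return "unsupported";
}
```

In a page, the function would presumably be called with the global object (for example `window`), and the result used to pick an appropriate error message.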

A system may have multiple physical GPUs; many laptops, for example, pair a low-power graphics card with a high-performance one.

Each GPU is represented by an adapter (GPUAdapter), which can be “requested” through the requestAdapter() method:

    const adapter = await navigator.gpu.requestAdapter({
      powerPreference: "high-performance"
    });

    if (!adapter) {
      throw new Error('No appropriate GPUAdapter found.');
    }

The high-performance setting for the powerPreference parameter suggests preferring the high-performance GPU.

The returned value can be null, for example if the hardware does not meet all the required features.

The adapter provides general information, limits, and supported optional features:

    // General information
    console.log(adapter.info.vendor);
    console.log(adapter.info.architecture);
    console.log(adapter.info.device);
    console.log(adapter.info.description);

    // Limits
    console.log(adapter.limits.maxTextureDimension2D);

    // Features
    console.log(adapter.features);

The WebGPU Report website shows all this information in detail.

Given an adapter, it is possible to request the associated device (GPUDevice). In WebGPU, code that owns a device acts as if it were the sole user of the graphics card. Consequently, a device is the owner of all the resources created from it (textures, etc.), which are released when the device is released.

A device acts as an “isolated context,” where resources are private and not accessible between different devices.

To obtain a device from the adapter, the requestDevice() method is used:

    const device = await adapter.requestDevice({
      requiredFeatures: [ "float32-filterable" ],
      requiredLimits: {
        // >= 1024
        "maxTextureDimension2D": 1024
      }
    });

When requesting a device, you can specify in requiredFeatures the optional features you want to enable.

In requiredLimits, you define the minimum acceptable values for certain characteristics, such as maximum texture dimensions.

The keys of requiredLimits must correspond to those defined by GPUSupportedLimits. If the adapter cannot satisfy the requested features or limits, the promise returned by requestDevice() is rejected, so an exception is thrown at the await.
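To report a clearer error before calling requestDevice(), the requested limits can be compared with those of the adapter. The sketch below assumes the two limit classes of the WebGPU specification: for most limits a higher value is better, while for the two alignment limits a lower value is better. The helper name unmetLimits is hypothetical, and plain records stand in for adapter.limits.

```typescript
// Limits of the "alignment" class: lower values are better, so a request
// can be satisfied only if it is >= the adapter's value.
const ALIGNMENT_LIMITS: string[] = [
  "minUniformBufferOffsetAlignment",
  "minStorageBufferOffsetAlignment",
];

// Hypothetical helper: returns the names of the requested limits that
// the adapter cannot satisfy. `supported` stands in for adapter.limits.
function unmetLimits(
  required: Record<string, number>,
  supported: Record<string, number>,
): string[] {
  const unmet: string[] = [];
  for (const name of Object.keys(required)) {
    const wanted = required[name];
    const available = supported[name];
    if (available === undefined) {
      unmet.push(name); // not a limit known to this adapter
      continue;
    }
    const isAlignment = ALIGNMENT_LIMITS.indexOf(name) !== -1;
    const satisfied = isAlignment ? wanted >= available : wanted <= available;
    if (!satisfied) {
      unmet.push(name);
    }
  }
  return unmet;
}
```

An empty result suggests the request should succeed as far as limits are concerned; a non-empty one lists the keys to relax or report to the user.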

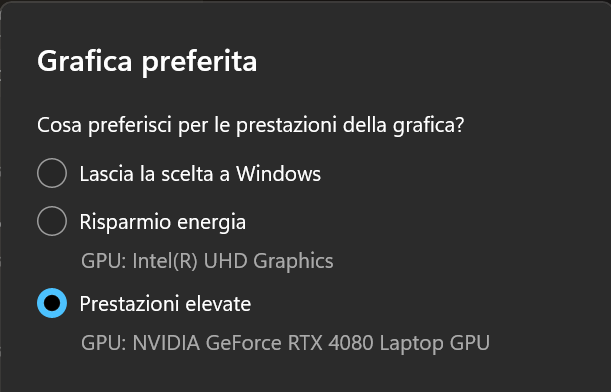

#Default GPU on Windows

On laptops with dual GPUs, the integrated card is usually preferred for running the browser. This prevents access to the high-performance GPU, even when specifying high-performance as the powerPreference in the adapter request. To change this setting, you need to open the Windows settings (Win + I), navigate to “System > Display > Graphics”, add the browser executable, and select the “High performance” option:

Selecting “high performance” as the default choice for the browser

#Canvas rendering

One of the most common uses of WebGPU is generating interactive graphics within a web page. This typically happens with the support of the <canvas> element. In the rest of the article, we will focus on this use case, although WebGPU is not limited to on-screen rendering.

To begin, let’s assume we have a <canvas> element defined in the HTML (the element is not self-closing, so it needs an explicit closing tag):

    <canvas id="canvas"></canvas>

The first step to integrate WebGPU with the canvas is to obtain a rendering context of type webgpu using the getContext() method:

    const canvas = document.getElementById("canvas")! as HTMLCanvasElement;
    const ctx = canvas.getContext('webgpu');
    if (!ctx) {
      throw new Error("Could not get 'webgpu' context from canvas!");
    }

This step is similar to what happens with traditional canvas drawing APIs, which use the 2d context type instead.

Before use, the WebGPU context must be configured by specifying at least the texture format:

    ctx.configure({
      device,
      format: navigator.gpu.getPreferredCanvasFormat()
    });

A texture is a pixel matrix, and its format specifies the binary encoding.

For canvas rendering, the getPreferredCanvasFormat() method returns the optimal format supported by the system, which avoids potential inefficiencies related to encoding conversions.

#Texture formats

The WebGPU specification defines the possible texture formats through the GPUTextureFormat enum.

The choice of format determines how pixels are stored and interpreted, affecting color precision, memory usage, and performance.

Texture formats are primarily characterized by three factors: the number and order of components (or channels), the number of bits per channel, and the data type of each channel.

#Number and order of components

This aspect defines what information is contained in each pixel of the texture. The most common formats include:

- r: stores only the red component (Red). Often used for masks, depth maps, or scalar data.

- rg: stores the red (Red) and green (Green) components. Useful for storing UV coordinates or other two-component data.

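- rgb: stores the red (Red), green (Green), and blue (Blue) components. Standard color representation, although WebGPU exposes three-channel textures only through packed formats such as rg11b10ufloat and rgb9e5ufloat.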

- rgba: stores the red (Red), green (Green), blue (Blue), and alpha (Alpha) components. Includes transparency.

- bgra: similar to rgba, but with the order of blue and red components reversed (Blue, Green, Red, Alpha). This format is often used for compatibility with specific platforms or graphics libraries.

#Bits per channel

The number of bits dedicated to each channel determines the precision with which that component can be represented. Typical values are 8, 16, or 32 bits. A higher number of bits allows for a more detailed representation of color or data, but also requires more memory. There are also “packed” formats where the number of bits varies between channels to optimize memory usage in specific scenarios.
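The memory cost of extra precision is easy to estimate for regular (non-packed) formats. The following sketch computes the raw size of a single texture level; the function names are hypothetical, and the result ignores any row padding or alignment that an implementation may add.

```typescript
// Bytes needed for one texel with `channels` components of
// `bitsPerChannel` bits each (regular, non-packed formats only).
function bytesPerTexel(channels: number, bitsPerChannel: number): number {
  return (channels * bitsPerChannel) / 8;
}

// Raw size in bytes of a width x height texture level.
function textureByteSize(
  width: number,
  height: number,
  channels: number,
  bitsPerChannel: number,
): number {
  return width * height * bytesPerTexel(channels, bitsPerChannel);
}

const MiB = 1024 * 1024;
const rgba8 = textureByteSize(1024, 1024, 4, 8) / MiB;   // 4 MiB
const rgba16 = textureByteSize(1024, 1024, 4, 16) / MiB; // 8 MiB
const rgba32 = textureByteSize(1024, 1024, 4, 32) / MiB; // 16 MiB
```

For the same 1024×1024 four-channel texture, each doubling of the bits per channel doubles the memory footprint.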

#Data type

The data type defines how individual channel values are stored and interpreted:

- uint (Unsigned Integer): Unsigned integer. Values represent non-negative whole numbers.

- sint (Signed Integer): Signed integer. Values represent positive and negative whole numbers.

- float: Floating-point number. Allows representing fractional values, offering greater precision and a wider dynamic range. Used for complex calculations and high-precision rendering.

- unorm (Unsigned Normalized): Unsigned normalized integer. The integer value is converted to a floating-point number in the [0, 1] range before being used in shaders.

- snorm (Signed Normalized): Signed normalized integer. The integer value is converted to a floating-point number in the [-1, 1] range before being used in shaders. Useful for representing surface normals or other directional vectors.
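The two normalized types can be illustrated with the conversion that is conventionally applied when a shader reads them. This is a minimal sketch of that arithmetic on the CPU side; the function names are hypothetical, and in practice the GPU performs the conversion in hardware.

```typescript
// Decodes an unsigned normalized integer:
// [0, 2^bits - 1] maps linearly to [0, 1].
function decodeUnorm(value: number, bits: number): number {
  const max = Math.pow(2, bits) - 1;
  return value / max;
}

// Decodes a signed normalized integer:
// [-(2^(bits-1) - 1), 2^(bits-1) - 1] maps linearly to [-1, 1].
// The most negative integer is clamped to -1.
function decodeSnorm(value: number, bits: number): number {
  const max = Math.pow(2, bits - 1) - 1;
  return Math.max(value / max, -1);
}

decodeUnorm(255, 8);  // 1
decodeUnorm(0, 8);    // 0
decodeSnorm(127, 8);  // 1
decodeSnorm(-128, 8); // -1 (clamped)
```

With 8 bits, unorm therefore has steps of 1/255 and snorm steps of 1/127, which is usually enough for colors and unit vectors respectively.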

#Color space

Some formats may have the -srgb suffix (e.g., rgba8unorm-srgb). This suffix indicates that the texture stores colors in the sRGB color space. When a shader reads from or writes to a texture of this type, gamma conversions between the sRGB (non-linear) space and the linear space are automatically applied.
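The conversion applied to -srgb textures follows the standard sRGB transfer functions. The sketch below reproduces them on the CPU for a single channel in the [0, 1] range, purely to illustrate the math; the function names are hypothetical, and the alpha channel is not affected by this conversion.

```typescript
// sRGB-encoded value in [0, 1] -> linear value in [0, 1].
// This is the direction applied when a shader reads the texture.
function srgbToLinear(c: number): number {
  return c <= 0.04045 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Linear value in [0, 1] -> sRGB-encoded value in [0, 1].
// This is the direction applied when a shader writes to the texture.
function linearToSrgb(c: number): number {
  return c <= 0.0031308 ? c * 12.92 : 1.055 * Math.pow(c, 1 / 2.4) - 0.055;
}
```

For instance, an encoded mid-gray of 0.5 corresponds to roughly 0.214 in linear space, which is why skipping the conversion makes images look too dark or too bright.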

#Example formats

Let’s analyze some examples to better understand how these factors combine:

- r32float: A single-channel (r) texture where each pixel value is a 32-bit floating-point number (float). Suitable for storing high-precision scalar data.

- rgba8uint: A four-channel (rgba) texture where each component is represented by an 8-bit unsigned integer (uint). Suitable for integer data such as identifiers or bit masks; color images are typically stored as rgba8unorm instead.

- rg16sint: A two-channel (rg) texture where each component is a 16-bit signed integer (sint). Useful for storing 2D vectors with both positive and negative values.

- bgra8unorm-srgb: A four-channel (bgra) texture where each component is an 8-bit unsigned normalized integer (unorm) and colors are stored in the sRGB color space. A common format for rendering output destined for canvas display.

The choice of format depends on the specific needs of the application, such as the required color precision, the amount of available memory, and the operations that will be performed on the texture within shaders.
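The naming scheme described in this section can be made explicit with a small parser that splits a format name into its factors. This is a minimal sketch: parseTextureFormat and ParsedFormat are hypothetical names, and only the regular "channels + bits + type" pattern is handled, so packed, depth/stencil, and compressed formats yield null.

```typescript
interface ParsedFormat {
  channels: string;       // e.g. "rgba"
  bitsPerChannel: number; // 8, 16 or 32
  dataType: string;       // uint, sint, float, unorm or snorm
  srgb: boolean;          // true when the -srgb suffix is present
}

// Hypothetical helper: decomposes a regular texture format name.
function parseTextureFormat(format: string): ParsedFormat | null {
  const pattern =
    /^(rgba|bgra|rgb|rg|r)(8|16|32)(uint|sint|float|unorm|snorm)(-srgb)?$/;
  const match = pattern.exec(format);
  if (match === null) {
    return null; // not a regular format name
  }
  return {
    channels: match[1],
    bitsPerChannel: parseInt(match[2], 10),
    dataType: match[3],
    srgb: match[4] !== undefined,
  };
}
```

Applied to the examples above, "bgra8unorm-srgb" yields four channels in bgra order, 8 bits, type unorm, and the sRGB flag set, while a packed name such as "rgb10a2unorm" is rejected by this simplified pattern.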

#Canvas sizing

For a canvas, we can consider two distinct dimensions:

- The on-screen size of the element, defined via CSS.

- The size of the texture associated with the canvas, determined by the HTML width and height attributes.

It is important that these two dimensions match, to avoid artifacts. For example, if the on-screen size is larger than the texture size, the result will be a blurry image, because the browser will upscale the texture.

One might think of solving the problem by simply setting the same values on the CSS and HTML sides:

    <style>
      #canvas {
        width: 384px;
        height: 384px;
      }
    </style>
    <canvas id="canvas" width="384" height="384"></canvas>

However, this is not always correct, because a CSS pixel may not correspond to an actual screen pixel.

The window.devicePixelRatio property returns the number of real pixels that correspond to one CSS pixel.

To account for this discrepancy, the canvas size must be set via JavaScript:

    <style>
      #canvas {
        width: 384px;
        height: 384px;
      }
    </style>
    <canvas id="canvas"></canvas>
    <script>
      const canvas = document.getElementById("canvas");
      canvas.width = Math.round(384 * window.devicePixelRatio);
      canvas.height = Math.round(384 * window.devicePixelRatio);
    </script>
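The sizing rule can be isolated in a small pure function, which makes it easy to reuse and to test. The sketch below also clamps the result to a maximum dimension. That clamp is an assumption added here: it is meant to receive a value such as the maxTextureDimension2D limit, since a canvas texture cannot exceed it. The name canvasTextureSize is hypothetical.

```typescript
interface Size {
  width: number;
  height: number;
}

// Hypothetical helper: converts an on-screen size in CSS pixels into
// the texture size in device pixels.
// `dpr` is the value of window.devicePixelRatio.
// `maxDimension` is assumed to come from a limit such as
// maxTextureDimension2D.
function canvasTextureSize(
  cssWidth: number,
  cssHeight: number,
  dpr: number,
  maxDimension: number,
): Size {
  const toDevicePixels = (cssPixels: number): number =>
    Math.max(1, Math.min(Math.round(cssPixels * dpr), maxDimension));
  return {
    width: toDevicePixels(cssWidth),
    height: toDevicePixels(cssHeight),
  };
}

const size = canvasTextureSize(384, 384, 1.5, 8192); // 576 x 576
```

With a devicePixelRatio of 1.5, the 384px canvas of the example gets a 576×576 texture, so one texel corresponds to one physical pixel.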

#Responsive canvas

If the canvas is responsive, its size changes must be monitored with a ResizeObserver:

    const resizeObserver = new ResizeObserver(onResizeCallback);
    resizeObserver.observe(canvas, { box: 'content-box' });

    function onResizeCallback([entry]: ResizeObserverEntry[]) {
      let width;
      let height;
      let dpr = window.devicePixelRatio;
      if (entry.devicePixelContentBoxSize) {
        // NOTE: Only this path gives the correct answer.
        // The other two paths are an imperfect fallback
        // for browsers that don't provide any way to do this.
        width = entry.devicePixelContentBoxSize[0].inlineSize;
        height = entry.devicePixelContentBoxSize[0].blockSize;
        dpr = 1; // it's already in width and height
      } else if (entry.contentBoxSize) {
        if (entry.contentBoxSize[0]) {
          width = entry.contentBoxSize[0].inlineSize;
          height = entry.contentBoxSize[0].blockSize;
        } else {
          // legacy
          width = (entry.contentBoxSize as any).inlineSize;
          height = (entry.contentBoxSize as any).blockSize;
        }
      } else {
        // legacy
        width = entry.contentRect.width;
        height = entry.contentRect.height;
      }

      const displayWidth = Math.round(width * dpr);
      const displayHeight = Math.round(height * dpr);

      // Make the canvas the same size
      const canvas = entry.target as HTMLCanvasElement;
      canvas.width = displayWidth;
      canvas.height = displayHeight;
    }

#Queues and commands

Interaction with the GPU mainly occurs in two phases:

- Creation of one or more command buffers.

- Submission of the buffers to a queue.

The queue is represented by the device.queue object, which provides the submit() method for sending commands to the GPU:

    device.queue.submit([
      commandBuffer1,
      commandBuffer2,
      // ...
    ]);

It is important to remember that submit() only schedules the execution of commands, while the actual processing by the GPU happens asynchronously.
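When the code needs to know that the GPU has actually finished, for example for timing or before reading results back, the queue offers the onSubmittedWorkDone() method, which returns a promise. The following is a minimal sketch: submitAndWait is a hypothetical helper, and QueueLike is a local stand-in for the two GPUQueue methods it uses, so that the snippet does not depend on WebGPU typings.

```typescript
// Local stand-in for the part of GPUQueue used by the sketch.
interface QueueLike {
  submit(commandBuffers: unknown[]): void;
  onSubmittedWorkDone(): PromiseLike<void>;
}

// Hypothetical helper: schedules the command buffers and returns a
// promise that settles once the work submitted so far has completed.
function submitAndWait(
  queue: QueueLike,
  commandBuffers: unknown[],
): PromiseLike<void> {
  // Returns immediately: the commands are only scheduled here.
  queue.submit(commandBuffers);
  // Resolves later, when the GPU has processed the submitted work.
  return queue.onSubmittedWorkDone();
}
```

In application code this would presumably be used as `await submitAndWait(device.queue, [commandBuffer])`. Waiting after every submission stalls the CPU, so it is best reserved for the cases that really need it.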

#Creating command buffers

Command buffers are generated through encoders, which act as builders:

    const encoder = device.createCommandEncoder();

    // ... Build commands ...

    // Build the command buffer
    const commandBuffer = encoder.finish();

    // Schedule the command execution on the GPU
    device.queue.submit([commandBuffer]);

The encoder’s finish() method returns the actual command buffer, ready to be submitted.

#Render pass

A rendering operation on a texture is called a render pass. To begin a render pass, the beginRenderPass() method is used, which returns a sub-builder dedicated to rendering operations:

    const encoder = device.createCommandEncoder();

    // Create a render pass
    const passEncoder = encoder.beginRenderPass({
      colorAttachments: [{
        view: ctx.getCurrentTexture().createView(),
        clearValue: { r: 1, g: 0, b: 0, a: 1 },
        loadOp: 'clear',
        storeOp: 'store'
      }]
    });

    // ...build render specific commands...

    // Terminate the render pass
    passEncoder.end();

    // Build the command buffer
    const commands = encoder.finish();

    // Schedule the command execution on the GPU
    device.queue.submit([commands]);

A render pass is initialized using an object called a descriptor, which contains several properties needed to configure the operation. Among these, the colorAttachments property specifies the textures to render to.

Since colorAttachments is an array, it is possible to render to multiple textures simultaneously.

The most important properties of a color attachment are:

- view: indicates the output texture. In the example, the texture associated with the canvas is set via ctx.getCurrentTexture().createView().

- clearValue: represents the color used to clear the texture contents before rendering.

- loadOp: determines the operation to perform on the data already present in the texture.

- storeOp: establishes whether the data generated during the render pass should be stored in the output texture.

To keep existing data in the texture, simply set loadOp to load, omitting the clearValue. Setting clear instead overwrites the existing data with the clearValue.

Finally, each render pass must be terminated with the end() command.
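The relationship between loadOp and clearValue can be captured in a small factory function. This is only a sketch: colorAttachment, Color, and ColorAttachment are hypothetical local names that mirror the properties discussed above, and the view type is left generic so that the snippet does not depend on WebGPU typings.

```typescript
interface Color {
  r: number;
  g: number;
  b: number;
  a: number;
}

// Local stand-in for the color attachment properties used in the article.
interface ColorAttachment<V> {
  view: V;
  loadOp: "clear" | "load";
  storeOp: "store" | "discard";
  clearValue?: Color;
}

// Hypothetical helper: with a clear color the texture is cleared before
// rendering, without one its existing contents are loaded and kept.
function colorAttachment<V>(view: V, clearValue?: Color): ColorAttachment<V> {
  if (clearValue) {
    return { view, clearValue, loadOp: "clear", storeOp: "store" };
  }
  return { view, loadOp: "load", storeOp: "store" };
}
```

In the example above, the attachment would presumably be built with `colorAttachment(ctx.getCurrentTexture().createView(), { r: 1, g: 0, b: 0, a: 1 })`, while a second pass that draws on top of the first would omit the color.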

#Complete code

The complete code that clears a canvas using WebGPU is as follows:

    <html lang="en">
    <head>
      <style>
        #canvas {
          width: 384px;
          height: 384px;
        }
      </style>
    </head>
    <body>
      <h1>Hello WebGPU</h1>
      <canvas id="canvas"></canvas>
      <script type="module">
        const device = await getDevice();
        const canvas = document.getElementById("canvas");
        canvas.width = Math.round(384 * window.devicePixelRatio);
        canvas.height = Math.round(384 * window.devicePixelRatio);
        const ctx = getCanvasContext(device, canvas);
        device.queue.submit([
          clearRenderPass(device, 1, 0, 0)
        ]);

        async function getDevice() {
          // Get the adapter and device
          if (!navigator.gpu) {
            throw new Error('WebGPU not supported on this browser.');
          }

          const adapter = await navigator.gpu.requestAdapter({
            powerPreference: "high-performance"
          });

          if (!adapter) {
            throw new Error('No appropriate GPUAdapter found.');
          }

          const device = await adapter.requestDevice();
          if (!device) {
            throw new Error('Could not get the device!');
          }
          return device;
        }

        function getCanvasContext(device, canvas) {
          // Get the WebGPU canvas context
          const ctx = canvas.getContext('webgpu');
          if (!ctx) {
            throw new Error("Could not get 'webgpu' context from canvas!");
          }

          ctx.configure({
            device,
            format: navigator.gpu.getPreferredCanvasFormat()
          });

          return ctx;
        }

        function clearRenderPass(device, r, g, b) {
          // Create an encoder
          const encoder = device.createCommandEncoder();

          // Create a render pass
          const passEncoder = encoder.beginRenderPass({
            colorAttachments: [{
              view: ctx.getCurrentTexture().createView(),
              clearValue: { r, g, b, a: 1 },
              loadOp: 'clear',
              storeOp: 'store'
            }]
          });

          // Terminate the render pass
          passEncoder.end();

          // Build the command buffer
          return encoder.finish();
        }
      </script>
    </body>
    </html>

The 01-hello branch of the GitHub repository contains the discussed implementation.

#Conclusions and next steps

WebGPU represents an important step forward in the evolution of web graphics APIs, offering a modern and performant interface for GPU interaction.

In this article, we explored the fundamental concepts, from GPU access to the command queue-based workflow. In the next article, we will see how to build a render pipeline to draw geometry on screen.