Render pipeline

In the previous article, we saw how to configure a render pass to clear the contents of a texture with a fixed background color. In this article, we will see how to use render pipelines: objects that, attached to a render pass, allow drawing geometry.

#Geometric primitives

GPUs are fundamentally able to draw three types of primitives: points, lines, and triangles. Curves and complex shapes are approximated as combinations of the basic primitives.

Primitives are composed of vertices, each defined by its coordinates. Coordinates are expressed in a reference system called Normalized Device Coordinates (NDC). In this system, the x and y coordinates range from -1 to 1, with the origin at the center of the viewport; in WebGPU, the depth (z) coordinate ranges from 0 to 1.

NDC coordinates are independent of the screen resolution and aspect ratio. The graphics card takes care of converting NDC coordinates into screen coordinates (or window coordinates), which are the actual device coordinates.
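As a rough illustration of the conversion described above, the mapping from NDC to pixel coordinates can be sketched on the CPU. The helper name ndcToPixel is hypothetical, and the sketch assumes a viewport that covers the whole render target; on real hardware this step is performed by the GPU, not by application code.

```typescript
// Hypothetical helper (not part of WebGPU): maps an NDC point to pixel
// coordinates, assuming the viewport covers the whole width x height target.
function ndcToPixel(
  x: number,
  y: number,
  width: number,
  height: number
): [number, number] {
  // NDC x runs from -1 (left edge) to 1 (right edge)
  const px = (x + 1) * 0.5 * width;
  // NDC y runs from -1 (bottom) to 1 (top), while pixel rows grow downward
  const py = (1 - y) * 0.5 * height;
  return [px, py];
}

// The NDC origin lands in the middle of an 800x600 target: x = 400, y = 300
const center = ndcToPixel(0, 0, 800, 600);
console.log(center);
```

The same NDC point therefore maps to different pixels on targets of different sizes, which is what makes the coordinates resolution-independent.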

#Shaders

A shader is a program that runs on the GPU. There are two types of shaders for rendering: the vertex shader and the fragment shader. The vertex shader is a program that runs for each vertex of the primitives to be drawn. Its task is to provide the vertex coordinates in the NDC system.

The fragment shader, instead, is invoked for each fragment, that is, each pixel covered by a primitive. Its purpose is to compute the color of that pixel. The GPU runs many invocations of both shaders in parallel, one per vertex or one per fragment.

#WGSL language

Shaders are written in a language called WebGPU Shading Language (WGSL):

```wgsl
@vertex
fn vs(@builtin(vertex_index) index: u32) -> @builtin(position) vec4f {
  let pos = array<vec2f, 3>(
    vec2f(-0.8, -0.8),
    vec2f(0.8, -0.8),
    vec2f(0, 0.8)
  );

  return vec4f(pos[index], 0.0, 1.0);
}
```

The vertex shader is a function defined with the fn keyword and annotated with @vertex. In the example, the function vs declares a parameter index of type u32, a 32-bit unsigned integer. The @builtin(vertex_index) annotation indicates that the parameter represents the (zero-based) index of the vertex. The return type is vec4f, a 4-component single-precision vector. The @builtin(position) annotation indicates that the return value represents the vertex position in Normalized Device Coordinates.

The function starts by declaring a value pos of type array<vec2f, 3>, which contains the coordinates of 3 vertices in NDC. Unlike JavaScript, in WGSL the let keyword declares an immutable value, while var is used for mutable variables.

The vertex coordinates are obtained by indexing the pos array with the index parameter, which is why the vertex shader in this example can only draw a single triangle. Since the return type is vec4f, the two-component vector pos[index] is expanded into a four-component vector by setting the third coordinate to 0 and the fourth to 1. The third and fourth components come into play when rendering 3D geometry, but at this point they are not important.

```wgsl
@fragment
fn fs() -> @location(0) vec4f {
  return vec4f(1, 1, 0, 1);
}
```

The fragment shader is a function annotated with @fragment. In this simple example, no input parameters are used.

The return type depends on how many and which textures are written as output. In the example, we assume writing to a single texture in floating-point format, so we use the vec4f type annotated with @location(0). A suitable texture format for this type of value is, for example, rgba32float; normalized formats such as rgba8unorm and bgra8unorm also accept a vec4f output.

The fragment shader returns a constant color vec4f(1, 1, 0, 1), with the red and green channels at 1 and the blue channel at 0; the fourth component is the alpha channel, which represents opacity, where 1 is fully opaque.
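To connect the shader's floating-point color with the hexadecimal notation commonly used on the web, here is a small sketch. The function colorToHex is a hypothetical helper; it assumes an 8-bit normalized target, rounds to the nearest value, and ignores both alpha and any color management applied by the browser.

```typescript
type Color = [number, number, number, number];

// Hypothetical helper: converts the RGB part of a vec4f-style color
// (channels in [0, 1]) to a #rrggbb string. Alpha is ignored.
function colorToHex(color: Color): string {
  const toHexByte = (channel: number): string => {
    // Clamp to [0, 1], then scale to the 0..255 range of an 8-bit channel
    const clamped = Math.min(Math.max(channel, 0), 1);
    const hex = Math.round(clamped * 255).toString(16);
    // Left-pad single-digit values with a zero
    return ("0" + hex).slice(-2);
  };
  return "#" + toHexByte(color[0]) + toHexByte(color[1]) + toHexByte(color[2]);
}

// The color returned by the fragment shader above is pure yellow
console.log(colorToHex([1, 1, 0, 1])); // #ffff00
```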

#Compilation

WGSL is a high-level language, so it must be compiled before it can be executed by the GPU. The code is translated into an intermediate-level language, such as SPIR-V (Vulkan, OpenGL, OpenCL), Metal Shading Language (Apple), or HLSL (Microsoft), which in turn is compiled into machine code (ISA, or Instruction Set Architecture). Machine code is specific to each vendor, for example NVIDIA, AMD, or Intel.

```typescript
export async function compileShader(device: GPUDevice, code: string) {
  // Capture validation errors raised while creating the shader module
  device.pushErrorScope('validation');

  const shaderModule = device.createShaderModule({ code });

  // Resolves to null when no validation error was recorded
  const error = await device.popErrorScope();

  if (error) {
    throw new Error(`Could not compile shader: ${error.message}`);
  }

  return shaderModule;
}
```

The compileShader function compiles the WGSL code using the createShaderModule() method. Compilation errors are recorded in the validation error scope. By wrapping the call between pushErrorScope and popErrorScope, it is possible to inspect that scope and throw an exception if an error occurred.

The returned shaderModule object is a reference to the compiled code, which can be attached to a render pipeline.
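The error scope reports that validation failed, but for debugging it can help to see the individual compiler messages. One option is the getCompilationInfo() method of the shader module, which resolves to a list of messages with line and column numbers. The sketch below is only an illustration: the names formatMessages and assertCompiled and the two structural interfaces are assumptions introduced so that the snippet stands alone. A real GPUShaderModule should satisfy ShaderModuleLike structurally.

```typescript
// Minimal structural type with the fields of GPUCompilationMessage read here
interface CompilationMessageLike {
  type: "error" | "warning" | "info";
  message: string;
  lineNum: number;
  linePos: number;
}

// Minimal structural type for the part of GPUShaderModule used here
interface ShaderModuleLike {
  getCompilationInfo(): Promise<{
    messages: readonly CompilationMessageLike[];
  }>;
}

// Hypothetical helper: one readable line per compiler message
function formatMessages(
  messages: readonly CompilationMessageLike[]
): string[] {
  return messages.map(
    (m) => `${m.type} at ${m.lineNum}:${m.linePos}: ${m.message}`
  );
}

// Hypothetical helper: throws if the module reported at least one error
async function assertCompiled(module: ShaderModuleLike): Promise<void> {
  const info = await module.getCompilationInfo();
  const errors = info.messages.filter((m) => m.type === "error");
  if (errors.length > 0) {
    throw new Error(formatMessages(errors).join("\n"));
  }
}
```

A call such as await assertCompiled(shaderModule) could be placed right after createShaderModule(), alongside or instead of the error scope.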

For developing shaders, it is convenient to create dedicated functions that return the code:

```typescript
export class TrianglePass {
  static shaderCode() {
    // language=WGSL
    return `
      @vertex
      fn vs(@builtin(vertex_index) index: u32) -> @builtin(position) vec4f {
        let pos = array<vec2f, 3>(
          vec2f(-0.8, -0.8),
          vec2f(0.8, -0.8),
          vec2f(0, 0.8)
        );

        return vec4f(pos[index], 0.0, 1.0);
      }

      @fragment
      fn fs() -> @location(0) vec4f {
        return vec4f(1, 1, 0, 1);
      }
    `;
  }
}
```

Compared to importing external files, this technique makes the full power of JavaScript available for generating code dynamically. Common parts shared between multiple shaders can be factored out, and it is also possible to vary the code based on parameters, for example the output texture format.
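As a small illustration of generating shader code from parameters, the following sketch builds a fragment shader for an arbitrary constant color. The function name fragmentShaderCode and the Color type are hypothetical, and the sketch assumes finite channel values, since NaN or Infinity would not produce valid WGSL literals.

```typescript
type Color = [number, number, number, number];

// Hypothetical generator: returns WGSL for a fragment shader that outputs
// the given constant color. Assumes every channel is a finite number.
function fragmentShaderCode(color: Color): string {
  const [r, g, b, a] = color;
  // language=WGSL
  return `
    @fragment
    fn fs() -> @location(0) vec4f {
      return vec4f(${r}, ${g}, ${b}, ${a});
    }
  `;
}

// Generates the shader source for an opaque orange
console.log(fragmentShaderCode([1, 0.5, 0, 1]));
```

The resulting string can be concatenated with a vertex shader and passed to the compileShader function shown earlier.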

#Rendering

To use shaders, it is necessary to create a render pipeline, which is mainly defined by:

- Topology: defines how to assemble vertices to form primitives.

- Vertex shader: function that transforms vertices.

- Fragment shader: function that computes pixel colors.

- Layout: defines how the data needed by the shaders is organized.

After generating the WGSL code and compiling the shaders, the render pipeline can be created using the createRenderPipeline() method, as shown in the following example:

```typescript
import { compileShader } from "@/webgpu/utils.ts";

export class TrianglePass {
  private readonly device: GPUDevice;
  private readonly pipeline: GPURenderPipeline;

  static async create(device: GPUDevice, format: GPUTextureFormat) {
    const code = TrianglePass.shaderCode();
    const module = await compileShader(device, code);

    // Create render pipeline
    const pipeline = device.createRenderPipeline({
      layout: "auto",
      vertex: { module, entryPoint: "vs" },
      fragment: {
        module,
        targets: [{ format }],
      },
      primitive: { topology: 'triangle-list' }
    });

    return new TrianglePass(device, pipeline);
  }

  private constructor(device: GPUDevice, pipeline: GPURenderPipeline) {
    this.device = device;
    this.pipeline = pipeline;
  }

  static shaderCode() {
    // ...
  }
}
```

Let's now analyze the different options used when creating the pipeline. In the simplest cases, the layout property can be set to the string auto, letting WebGPU derive an adequate layout from the shader code.

The vertex and fragment properties define module, i.e. the compiled WGSL code, and optionally an entryPoint, which is the name of the specific function to invoke. Specifying the entry point is required when the same module contains more than one function annotated with @vertex or @fragment. The fragment property also defines a targets array containing at least one object that specifies the output texture format.

The triangle-list topology forms one triangle for every 3 vertices. Other topologies are point-list (1 point per vertex), line-list (1 segment every 2 vertices), line-strip (after the first vertex, one segment per vertex), and triangle-strip (after the first two vertices, one triangle per vertex).
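The rules above can be summarized in a small function that computes how many primitives a draw call produces for a given topology. The function primitiveCount and the Topology alias are hypothetical names used for illustration only; the sketch ignores strip restarts, which are not covered in this article.

```typescript
type Topology =
  | "point-list"
  | "line-list"
  | "line-strip"
  | "triangle-list"
  | "triangle-strip";

// Hypothetical helper: number of primitives assembled from vertexCount
// vertices. Leftover vertices that cannot complete a primitive are dropped.
function primitiveCount(topology: Topology, vertexCount: number): number {
  switch (topology) {
    case "point-list":
      return vertexCount; // 1 point per vertex
    case "line-list":
      return Math.floor(vertexCount / 2); // 1 segment every 2 vertices
    case "line-strip":
      return Math.max(vertexCount - 1, 0); // 1 segment per vertex after the first
    case "triangle-list":
      return Math.floor(vertexCount / 3); // 1 triangle every 3 vertices
    case "triangle-strip":
      return Math.max(vertexCount - 2, 0); // 1 triangle per vertex after the first two
  }
}

// Four vertices form one triangle as a list, but two triangles as a strip
console.log(primitiveCount("triangle-list", 4)); // 1
console.log(primitiveCount("triangle-strip", 4)); // 2
```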

#Using the render pipeline

As seen previously, to perform rendering it is necessary to create a render pass:

```typescript
export class TrianglePass {
  private readonly device: GPUDevice;
  private readonly pipeline: GPURenderPipeline;

  // ...

  render(texture: GPUTexture) {
    // Create root encoder
    const commandEncoder = this.device.createCommandEncoder();

    // Create a render pass
    const passEncoder = commandEncoder.beginRenderPass({
      colorAttachments: [{
        view: texture.createView(),
        loadOp: 'load',
        storeOp: 'store'
      }]
    });
    passEncoder.setPipeline(this.pipeline);
    passEncoder.draw(3);

    // Terminate the render pass
    passEncoder.end();

    // Build the command buffer
    return commandEncoder.finish();
  }
}
```

The render pipeline is attached to the render pass via the setPipeline() method. There are several methods for drawing geometry, but the simplest is draw(), which takes the number of vertices to draw as an argument. After that, the render pass can be terminated, and the command buffer is created for submission to the device queue.
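The render() method only builds the command buffer; it still has to be submitted. A minimal sketch of that last step follows. The function drawFrame and the three structural interfaces are assumptions made so that the snippet compiles on its own; in a real application, GPUDevice, GPUCanvasContext, and the TrianglePass class above should fit them structurally.

```typescript
// Minimal structural types standing in for the WebGPU objects involved
interface DeviceLike<TCommands> {
  queue: { submit(buffers: TCommands[]): void };
}

interface CanvasContextLike<TTexture> {
  getCurrentTexture(): TTexture;
}

interface RenderPassLike<TTexture, TCommands> {
  render(texture: TTexture): TCommands;
}

// Hypothetical helper: renders one frame into the canvas' current texture
function drawFrame<TTexture, TCommands>(
  device: DeviceLike<TCommands>,
  context: CanvasContextLike<TTexture>,
  pass: RenderPassLike<TTexture, TCommands>
): void {
  // Ask the canvas for the texture that will be presented next
  const texture = context.getCurrentTexture();
  // Record the commands for this frame
  const commands = pass.render(texture);
  // Hand the command buffer over to the GPU for execution
  device.queue.submit([commands]);
}
```

With real WebGPU objects the call would look like drawFrame(device, context, trianglePass), typically inside a requestAnimationFrame callback.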

#Results

The result of all these steps is a yellow triangle drawn on the canvas. 🎉

A yellow triangle on a black background.

To summarize, the initialization phase involves:

- Compiling the shaders with createShaderModule().

- Creating a render pipeline with createRenderPipeline(), specifying the vertex shader, fragment shader, output texture format, and desired topology.

Regarding rendering, we have:

- Creating an encoder via createCommandEncoder().

- Starting the render pass through beginRenderPass() with the output texture configuration.

- Activating the render pipeline via setPipeline(), followed by drawing geometry with the draw() method.

- Concluding the render pass by calling end() and generating the command buffer with finish().

- Submitting the command buffer to the device queue with submit().

You can find the complete source code for this example on GitHub.

#Chrome and color management

While writing this article, I encountered a strange problem: the triangle drawn on the canvas was not exactly yellow (#FFFF00) as expected, but a slightly different color (#FBFF25). After some research, I discovered that the cause is Chrome’s color management. The browser automatically applies a color correction, altering the final result.

To solve this problem, you can visit chrome://flags and set the Force color profile option to sRGB instead of Default. Mozilla Firefox, on the other hand, does not exhibit this behavior: colors are managed more consistently with expectations.

#Inter-stage variables

The vertex shader, in addition to the position, can return additional values that are passed on to the fragment shader. These additional values are called inter-stage variables. In the 02-shaders-interstage branch of the GitHub repository, the TrianglePass class is updated to add a distinct color to each vertex:

```typescript
export class TrianglePass {
  static shaderCode() {
    // language=WGSL
    return `
      struct VertexOutput {
        @builtin(position) pos: vec4f,
        @location(0) color: vec4f
      }

      @vertex
      fn vs(@builtin(vertex_index) index: u32) -> VertexOutput {
        let pos = array<vec2f, 3>(
          vec2f(-0.8, -0.8),
          vec2f(0.8, -0.8),
          vec2f(0, 0.8)
        );
        let col = array<vec4f, 3>(
          vec4f(1, 0, 0, 1),
          vec4f(0, 1, 0, 1),
          vec4f(0, 0, 1, 1)
        );

        var vertex: VertexOutput;
        vertex.pos = vec4f(pos[index], 0.0, 1.0);
        vertex.color = col[index];
        return vertex;
      }

      struct FragmentInput {
        @location(0) color: vec4f
      }

      @fragment
      fn fs(fragment: FragmentInput) -> @location(0) vec4f {
        return fragment.color;
      }
    `;
  }
}
```

The vertex shader now returns a structure called VertexOutput, which includes both the position and the vertex color. On the other side, the fragment shader receives a structure called FragmentInput that contains the pixel color.

A fundamental aspect to understand is that inter-stage variables are linked between the vertex and fragment shaders through @location() annotations. Specifically, the @location(0) annotation associates (binds) the inter-stage variable color defined in the vertex shader with the one used in the fragment shader. This linking is based exclusively on the location number and not on the variable names.

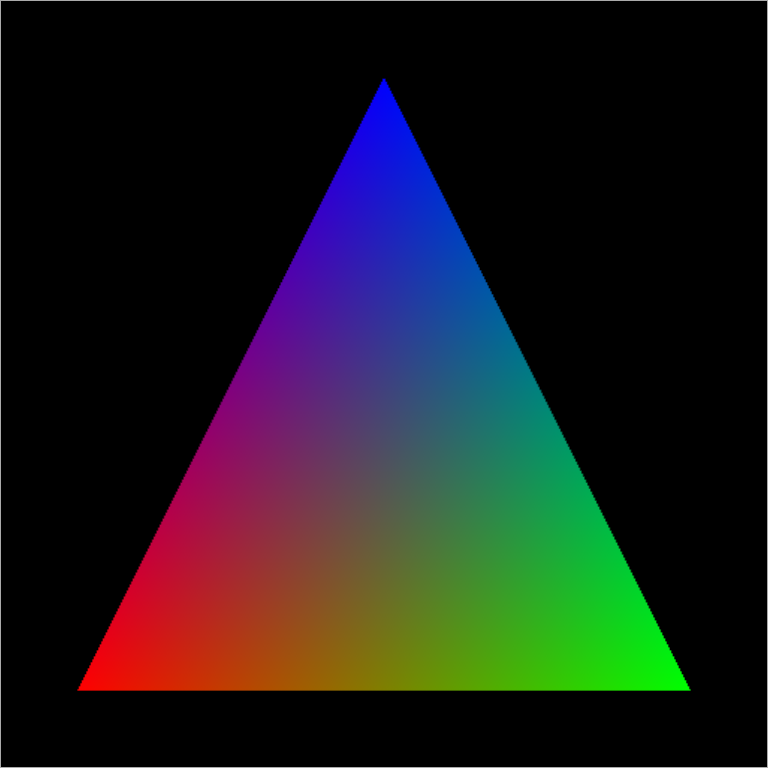

The final result is the following:

A triangle on a black background with a red, green, and blue vertex. The interior of the triangle is a color gradient resulting from the interpolation of the vertex colors.

Although the vertex shader defines only 3 colors (one for each vertex), the interior of the triangle is filled with a color gradient. This happens because inter-stage variables are, by default, interpolated across the triangle from the values at its vertices, thus generating the gradient.

It is possible to change the interpolation mode through the @interpolate annotation, which accepts the values flat, linear, and perspective (the default value):

```wgsl
struct VertexOutput {
  @builtin(position) pos: vec4f,
  @location(0) @interpolate(linear) color: vec4f
}
```

#Linear interpolation

Linear interpolation is a way to estimate an intermediate value between two known values. Imagine a 1D function that has a value $y_0$ at $x_0$ and a value $y_1$ at $x_1$. Linear interpolation allows computing the function's value for any $x$ between $x_0$ and $x_1$ by drawing a straight line between the two values: $y = y_0 + (y_1 - y_0)\,\frac{x - x_0}{x_1 - x_0}$. This same idea naturally extends to more dimensions and to different types of attributes, including colors.
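To make the idea concrete, here is a CPU-side sketch of linear interpolation applied to colors. The names lerp and mixColors are hypothetical, and the parameter t is assumed to be the normalized position between the two endpoints (0 at the first, 1 at the second). The GPU interpolates across a triangle using three weights rather than one, but the principle is the same.

```typescript
type Color = [number, number, number, number];

// Linear interpolation between a and b; t = 0 yields a, t = 1 yields b
function lerp(a: number, b: number, t: number): number {
  return a + (b - a) * t;
}

// Hypothetical helper: interpolates two colors channel by channel
function mixColors(c0: Color, c1: Color, t: number): Color {
  return [
    lerp(c0[0], c1[0], t),
    lerp(c0[1], c1[1], t),
    lerp(c0[2], c1[2], t),
    lerp(c0[3], c1[3], t),
  ];
}

const red: Color = [1, 0, 0, 1];
const green: Color = [0, 1, 0, 1];

// Halfway along the edge between the red and the green vertex
console.log(mixColors(red, green, 0.5)); // channels: 0.5, 0.5, 0, 1
```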

#Conclusions and next steps

In this article, we saw how to draw geometry with WebGPU, from primitives to creating a render pipeline. We introduced the concepts of vertex and fragment shaders, applying them to draw a colored triangle.

In the next article, we will see how to manipulate geometry through geometric transformations.